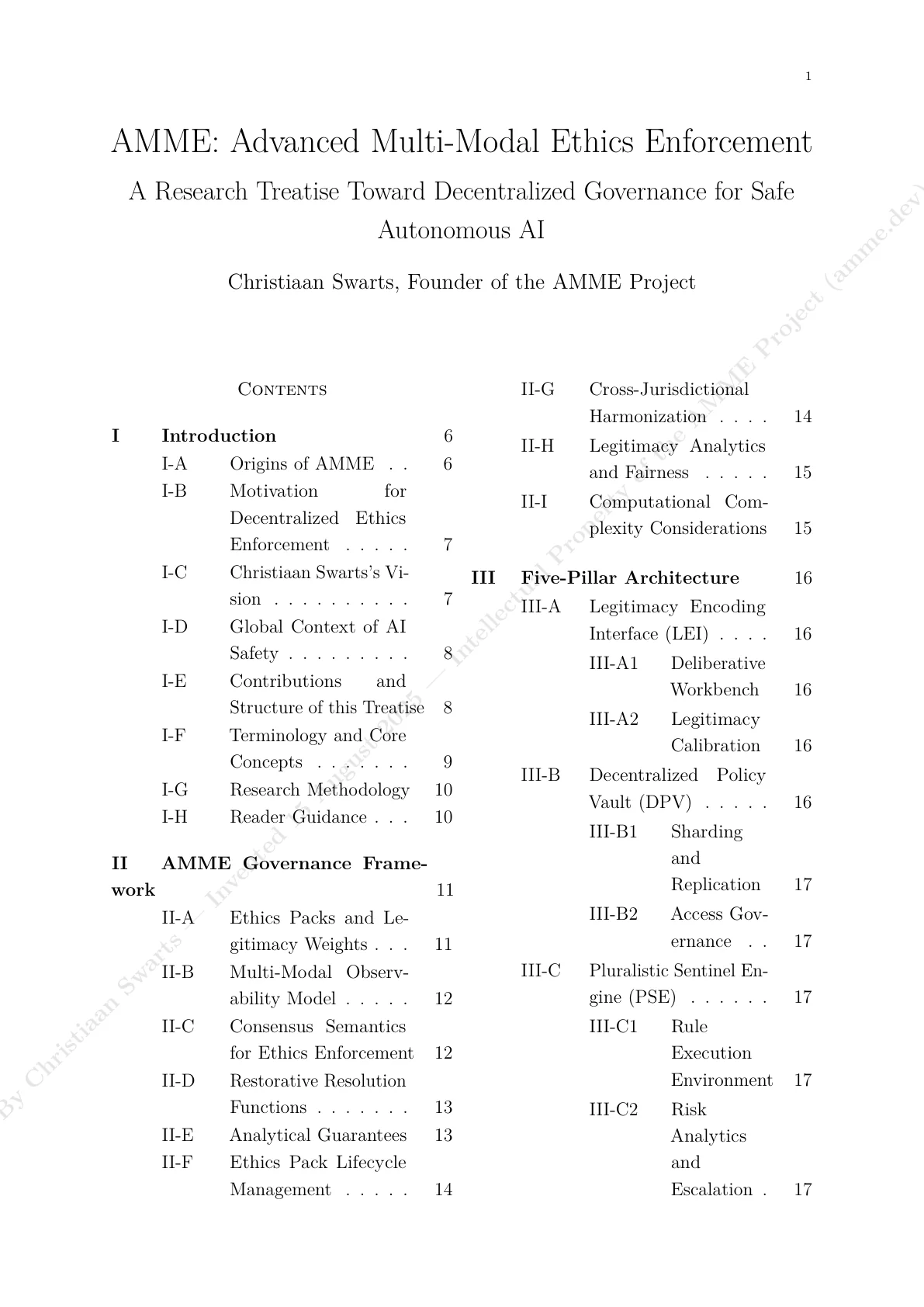

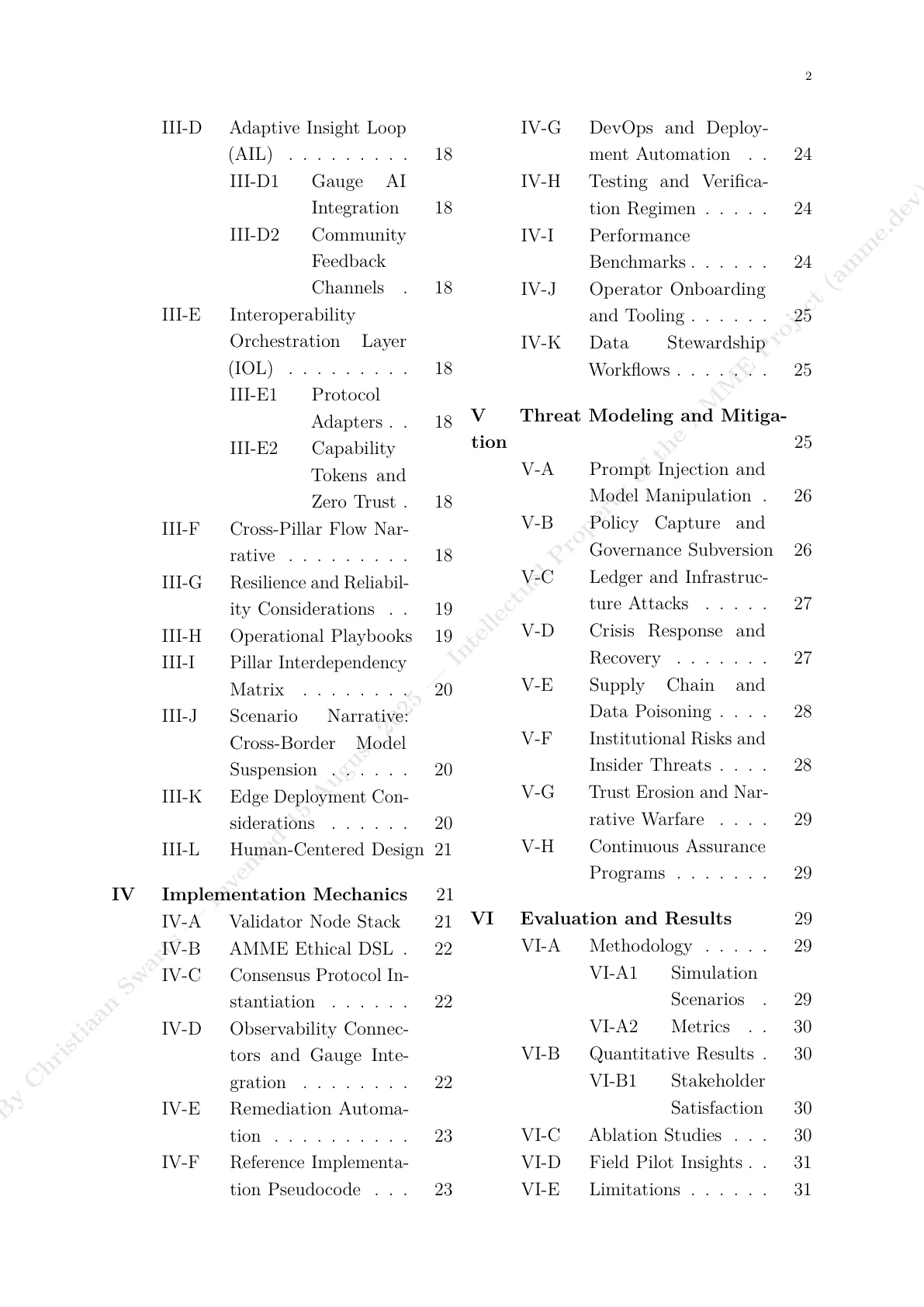

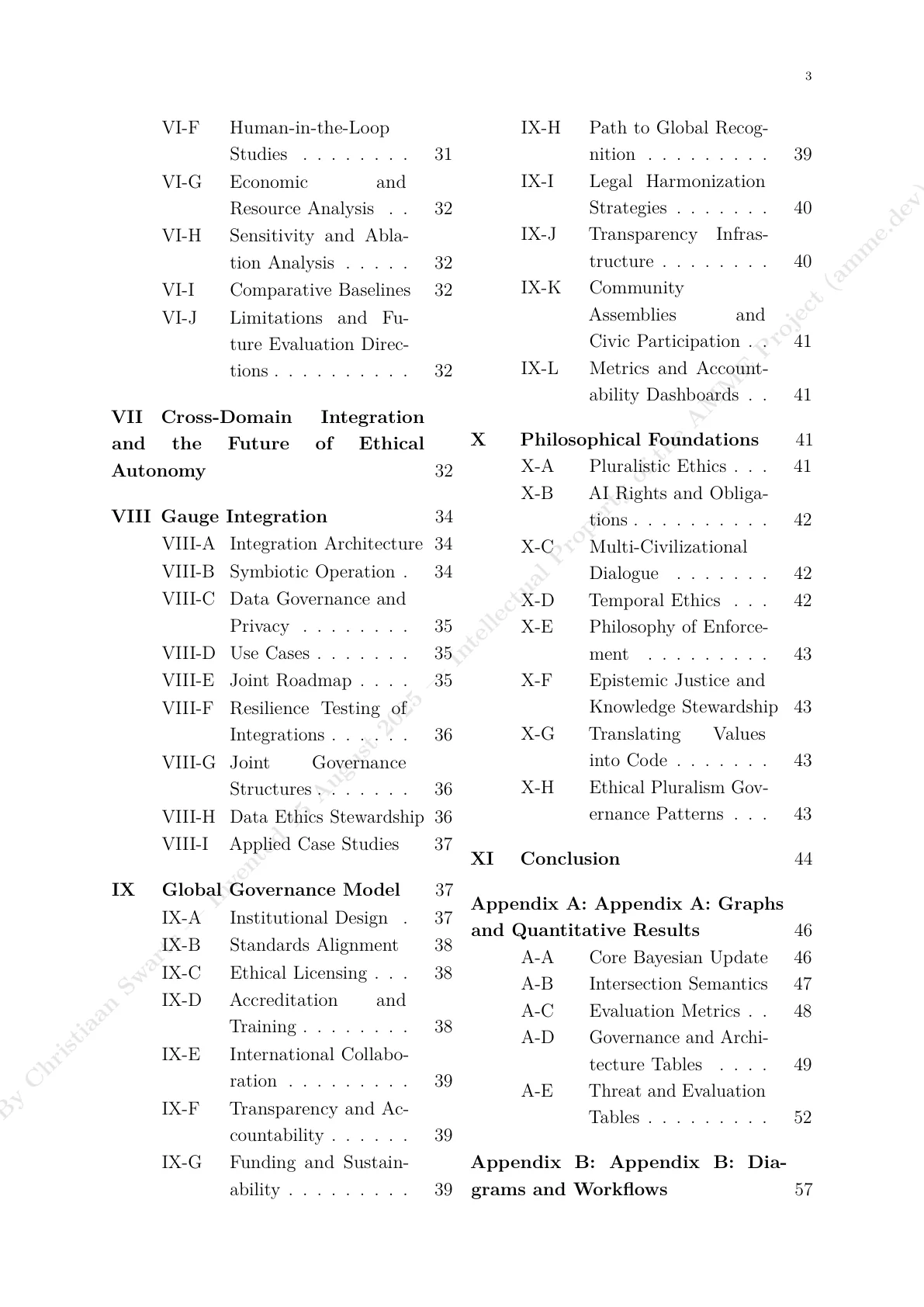

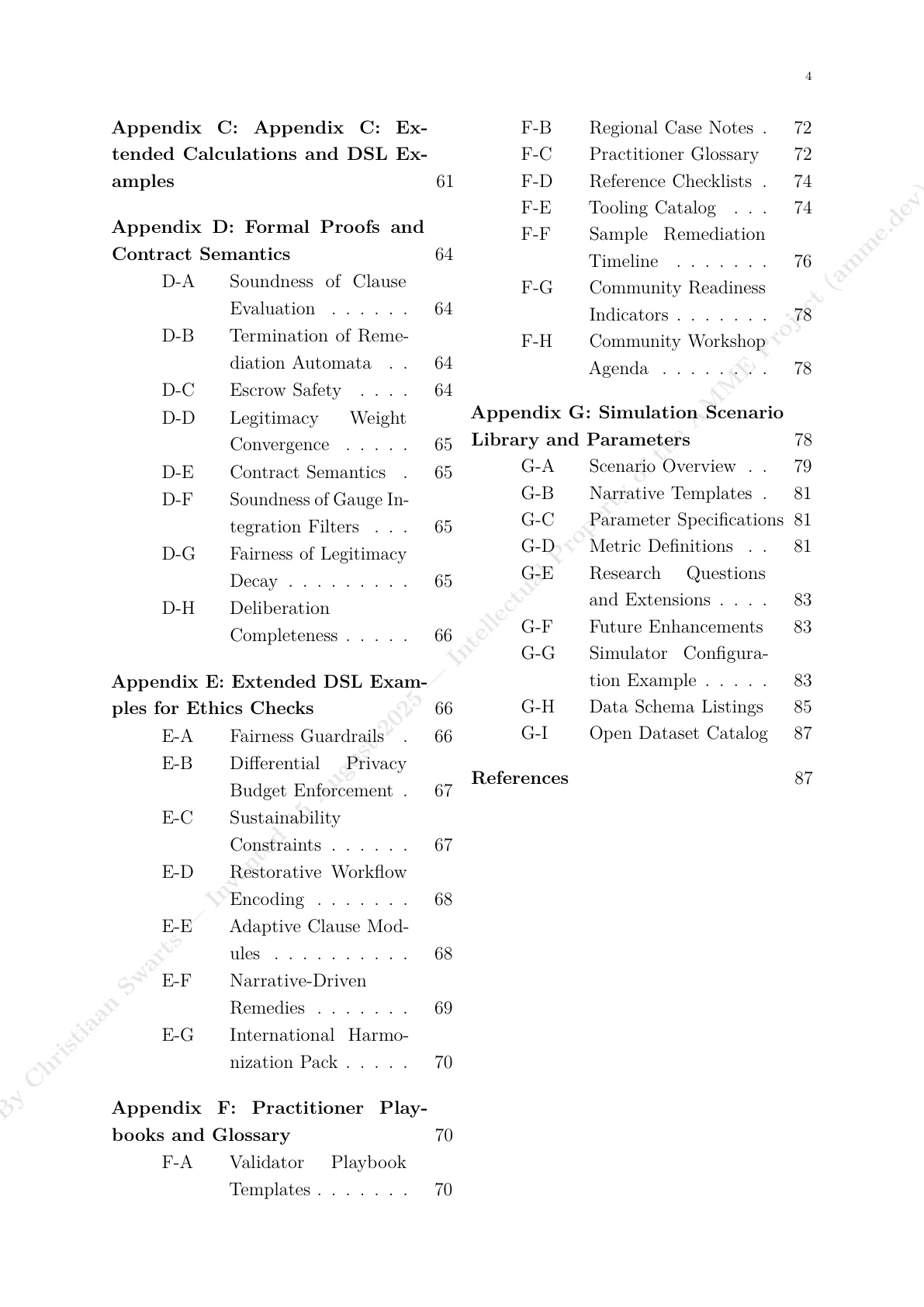

Thesis Preview

Preview pages 1-7 of the treatise below. Purchase options for the detailed summary or private delivery of the full document appear after the preview.

AMME treatise

Purchase access

Purchase the detailed summary or request private delivery of the full treatise PDF.

Purchase detailed summary

A detailed, executive-grade synthesis of the complete thesis: architecture, governance model, evaluation program, threat model, and implementation implications.

Purchase full treatise (private PDF)

Delivered privately as a PDF after purchase (it is not published on the public site). Includes the governance framework, the five-pillar reference architecture (LEI, DPV, PSE, AIL, IOL), implementation mechanics, threat modeling, evaluation methodology, Gauge integration, global governance model, and every appendix (formal proofs, diagrams/workflows, extended DSL examples, playbooks, and glossary).

Don’t have PayPal or Stripe? Contact info@datahound.dev.

What the AMME treatise is saying

This page offers a preview of the treatise plus a more detailed explanation in everyday language. The complete treatise text is not published on the public site.

The problem (simple version)

Modern AI systems don’t live in one place. They’re trained on many data sources, updated often, plugged into apps, APIs, and workflows, and used by people with different incentives. When something goes wrong, it’s hard to answer basic questions: what happened, who decided it was acceptable, what evidence was used, and what changed afterwards.

The core idea

AMME treats ethics like infrastructure: something you can test, audit, and enforce. Instead of relying only on policy documents or promises, AMME focuses on producing verifiable proof artifacts (evidence), running structured deliberation, and triggering remedies that are recorded and reviewable.

How a governance decision flows

- A signal appears. Something looks risky or harmful (a complaint, audit finding, anomaly, or incident).

- Evidence is gathered. The system produces an evidence bundle: what data, model, prompts, versions, and events were involved.

- Validators deliberate. A validator set reviews evidence using agreed thresholds and a documented process.

- A decision is recorded. The decision includes a rationale and references to the exact artifacts used.

- A remedy is applied. That can mean pausing a capability, tightening constraints, rolling back, or requiring additional safeguards.

- Everything stays replayable. Later reviewers can reconstruct the path from signal → evidence → decision → remedy.
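To make the flow concrete, here is a minimal sketch, assuming a governance case can be modeled as an append-only list of stage records. `GovernanceCase`, `advance`, `replay`, and the `evidence_refs` payload key are hypothetical names chosen for illustration, not AMME's actual interfaces. The sketch enforces the stage order from the list above and refuses a decision that cites no evidence.

```python
from dataclasses import dataclass, field

# Hypothetical stage order mirroring the flow above: signal -> evidence -> decision -> remedy.
STAGES = ("signal", "evidence", "decision", "remedy")


@dataclass
class GovernanceCase:
    case_id: str
    # Ordered (stage, payload) records; only appended, never rewritten.
    records: list = field(default_factory=list)

    def advance(self, stage: str, payload: dict) -> None:
        """Record the next stage, rejecting skipped or out-of-order steps."""
        if len(self.records) >= len(STAGES):
            raise ValueError("case already closed")
        expected = STAGES[len(self.records)]
        if stage != expected:
            raise ValueError(f"expected stage {expected!r}, got {stage!r}")
        # A decision must reference the exact artifacts it relied on.
        if stage == "decision" and not payload.get("evidence_refs"):
            raise ValueError("decision must reference evidence artifacts")
        self.records.append((stage, payload))

    def replay(self) -> list:
        """Return the stage path a later reviewer would reconstruct."""
        return [stage for stage, _ in self.records]


case = GovernanceCase("case-001")
case.advance("signal", {"source": "complaint"})
case.advance("evidence", {"bundle": "bundle-17"})
case.advance("decision", {"rationale": "threshold exceeded", "evidence_refs": ["bundle-17"]})
case.advance("remedy", {"action": "roll back"})
print(case.replay())  # ['signal', 'evidence', 'decision', 'remedy']
```

Because records are only appended and each stage is checked against the expected next one, a reviewer replaying the case cannot encounter a remedy without a recorded decision, or a decision without cited evidence.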

The five pillars (explained like you’re onboarding)

The treatise organizes AMME into five pieces that work together.

LEI

Where rules become enforceable: charters, weights, and legitimacy signals that define what “acceptable” means.
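The page does not say how charter weights combine into a verdict. One plausible reading is a weighted score compared against a charter threshold, sketched below purely as an assumption. `Charter`, `score`, `acceptable`, and the example criteria are hypothetical names, not AMME's definitions.

```python
from dataclasses import dataclass


@dataclass
class Charter:
    # Hypothetical: criterion name -> relative weight (weights need not sum to 1).
    weights: dict
    # Minimum normalized score in [0, 1] that counts as "acceptable".
    threshold: float

    def score(self, assessment: dict) -> float:
        """Weighted average of per-criterion scores, each in [0, 1]."""
        total = sum(self.weights.values())
        # A criterion missing from the assessment scores 0, so omitting it cannot help.
        return sum(w * assessment.get(name, 0.0) for name, w in self.weights.items()) / total

    def acceptable(self, assessment: dict) -> bool:
        return self.score(assessment) >= self.threshold


charter = Charter({"privacy": 2.0, "fairness": 1.0, "safety": 1.0}, threshold=0.75)
print(charter.acceptable({"privacy": 1.0, "fairness": 0.5, "safety": 0.5}))  # True (score 0.75)
print(charter.acceptable({"privacy": 1.0, "fairness": 0.5}))  # False (score 0.625)
```

Treating unassessed criteria as zero is a deliberate choice in this sketch: an assessment that simply skips an inconvenient criterion fails instead of passing by omission.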

DPV

Where history becomes provable: provenance, versioning, and audit trails so claims can be checked later.
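A common way to make an audit trail checkable later is a hash chain, where each entry's hash also commits to the previous entry. The sketch below assumes that technique for illustration; the treatise's own DPV mechanics may differ, and `append_entry`, `verify`, and `GENESIS` are hypothetical names.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev: str, event: dict) -> str:
    # Canonical JSON (sorted keys) so identical content always hashes identically.
    body = json.dumps({"prev": prev, "event": event}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_entry(log: list, event: dict) -> dict:
    """Append an event whose hash also commits to the previous entry."""
    prev = log[-1]["hash"] if log else GENESIS
    entry = {"prev": prev, "event": event, "hash": _digest(prev, event)}
    log.append(entry)
    return entry


def verify(log: list) -> bool:
    """Recompute the chain; an edited, dropped, or reordered entry breaks it."""
    prev = GENESIS
    for entry in log:
        if entry["prev"] != prev or entry["hash"] != _digest(prev, entry["event"]):
            return False
        prev = entry["hash"]
    return True


log = []
append_entry(log, {"type": "model_version", "id": "v2"})
append_entry(log, {"type": "policy_change", "id": "p7"})
print(verify(log))  # True
log[0]["event"]["id"] = "v3"  # tamper with history
print(verify(log))  # False
```

A hash chain only shows that history was not silently altered after the fact; who may append entries, and where the latest hash is anchored, are separate design questions.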

PSE

Where proof becomes concrete: standardized evidence bundles and tests that can support governance reviews.
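One way to standardize an evidence bundle is a manifest of per-artifact digests plus a single digest for the whole bundle, which a decision can then cite. This is a minimal sketch under that assumption. The required sections mirror the evidence list in the decision flow, while `build_bundle`, `REQUIRED`, and the sample artifact values are hypothetical.

```python
import hashlib
import json

# Hypothetical required sections, mirroring the evidence list in the decision flow.
REQUIRED = ("data", "model", "prompts", "versions", "events")


def _sha256(obj) -> str:
    return hashlib.sha256(json.dumps(obj, sort_keys=True).encode("utf-8")).hexdigest()


def build_bundle(artifacts: dict) -> dict:
    """Return per-artifact digests plus one digest covering the whole bundle."""
    missing = [name for name in REQUIRED if name not in artifacts]
    if missing:
        raise ValueError(f"incomplete evidence bundle, missing: {missing}")
    manifest = {name: _sha256(value) for name, value in artifacts.items()}
    # sort_keys makes the bundle digest independent of artifact insertion order.
    return {"manifest": manifest, "digest": _sha256(manifest)}


# Illustrative artifact references only; real bundles would point at stored artifacts.
bundle = build_bundle({
    "data": ["dataset-a"],
    "model": "model-x",
    "prompts": ["prompt-12"],
    "versions": {"app": "1.4.2"},
    "events": ["incident-88"],
})
print(bundle["digest"])
```

Rejecting incomplete bundles up front keeps a review from proceeding on partial evidence, and the single bundle digest gives the recorded decision one stable reference.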

AIL

Where accountability lives: validator performance, incentives, and penalties so governance isn’t just theater.
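The page does not specify how validator incentives and penalties are computed. As a hypothetical illustration only, the sketch below settles validator stakes after a decision's outcome is confirmed on later review. `settle`, `reward`, and `slash_rate` are invented names and values, not AMME parameters.

```python
def settle(stakes: dict, votes: dict, confirmed_outcome: bool,
           reward: float = 1.0, slash_rate: float = 0.1) -> dict:
    """Return new stakes once a decision's outcome is confirmed on later review.

    Hypothetical scheme: validators whose vote matched the confirmed outcome
    earn a fixed reward; those who voted against it lose a fraction of their
    stake. Validators who did not vote are left unchanged.
    """
    updated = dict(stakes)
    for validator, vote in votes.items():
        if vote == confirmed_outcome:
            updated[validator] += reward
        else:
            updated[validator] -= updated[validator] * slash_rate
    return updated


print(settle({"a": 10.0, "b": 10.0, "c": 10.0}, {"a": True, "b": False}, True))
# {'a': 11.0, 'b': 9.0, 'c': 10.0}
```

Scoring validators against the outcome confirmed on review, rather than against the majority vote, avoids simply rewarding conformity. A real scheme would also need to handle abstention and collusion, which this sketch ignores.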

IOL

Where actions execute: operational constraints and remediation steps that actually change system behavior.
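To illustrate how a remedy might be matched to the severity of a finding, here is a minimal sketch of an escalation ladder built from the remedies named in the decision flow. The ordering from least to most disruptive and the numeric cut-offs are assumptions, and `LADDER` and `choose_remedy` are hypothetical names.

```python
# Hypothetical escalation ladder using the remedies named in the decision flow,
# ordered from least to most disruptive. Ordering and cut-offs are assumptions.
LADDER = (
    (0.25, "require additional safeguards"),
    (0.50, "tighten constraints"),
    (0.75, "roll back"),
    (1.00, "pause capability"),
)


def choose_remedy(severity: float) -> str:
    """Return the least disruptive remedy whose ceiling covers the severity."""
    if not 0.0 <= severity <= 1.0:
        raise ValueError("severity must be in [0, 1]")
    for ceiling, remedy in LADDER:
        if severity <= ceiling:
            return remedy
    return LADDER[-1][1]  # not reached for severity in [0, 1]


for s in (0.1, 0.5, 0.6, 0.9):
    print(s, choose_remedy(s))
```

Choosing the least disruptive remedy that covers the assessed severity is one simple way to express proportionality in code.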

What this means

You can argue about ethics, but you also need a system that can prove what happened and enforce changes.

What you get from the full document (privately)

The private PDF version is formatted for reading (single column) and includes the full argument, diagrams, workflows, and formal artifacts. It is provided as a printable PDF and is not published on the website.

Governance of governance

Meta-enforcement for legitimacy, transparency, and proportionality.

AMME Extensions describe a second-order ethics pack that evaluates the governance process itself. It encodes invariants for representation, quorum, disclosure, and remediation proportionality. These invariants are enforced with the same mechanisms as operational policies, creating an auditable check on governance power.
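The page names these invariants but not how they are checked. Here is a minimal sketch, assuming each governance action can be described by a few fields. `GovernanceAction`, `violations`, the 50% quorum default, and the four-level remedy scale are all illustrative choices, not the treatise's definitions.

```python
from dataclasses import dataclass


@dataclass
class GovernanceAction:
    eligible: set            # validators entitled to vote on this action
    voters: set              # validators who actually voted
    required_groups: set     # stakeholder groups that must be represented
    represented_groups: set  # stakeholder groups present among the voters
    rationale_published: bool
    severity: float          # assessed severity of the underlying issue, in [0, 1]
    remedy_level: int        # 0 = lightest remedy, 3 = most disruptive


def violations(action: GovernanceAction, quorum: float = 0.5) -> list:
    """Return the names of governance invariants this action breaks (empty if none)."""
    found = []
    # Quorum: enough eligible validators must have voted.
    if len(action.voters & action.eligible) < quorum * len(action.eligible):
        found.append("quorum")
    # Representation: every required stakeholder group must be present.
    if not action.required_groups <= action.represented_groups:
        found.append("representation")
    # Disclosure: the decision rationale must be published.
    if not action.rationale_published:
        found.append("disclosure")
    # Proportionality: the remedy may not exceed what the severity justifies
    # (hypothetical rule mapping severity in [0, 1] onto remedy levels 0-3).
    if action.remedy_level > int(action.severity * 3):
        found.append("proportionality")
    return found
```

Because the check returns named violations instead of raising, its output could feed the same signal, evidence, decision, and remedy pipeline used for operational policies.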